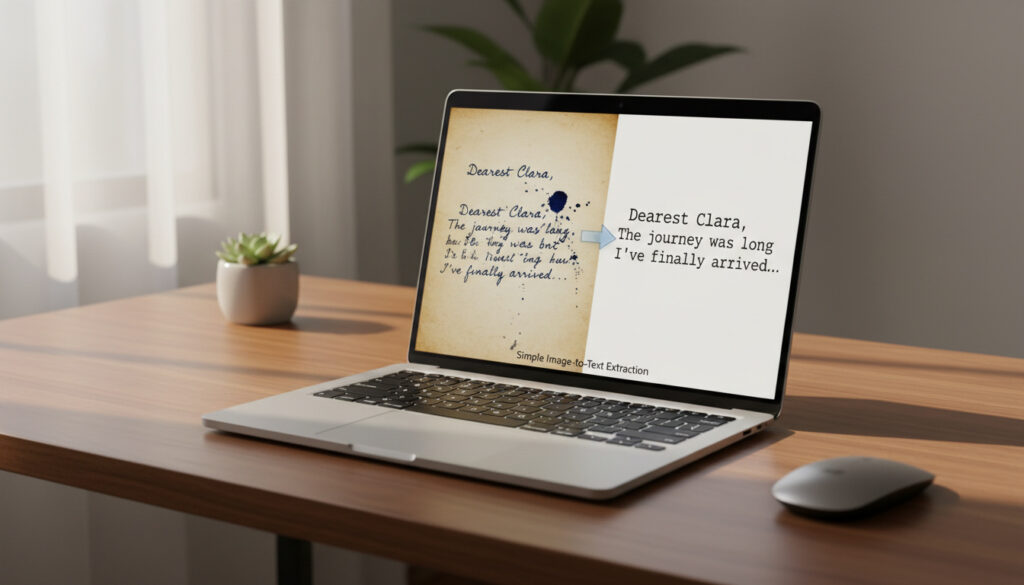

Image to Text – Extract Text From Images Easily

Image to Text Technology Reshapes Digital Workflows

The conversion of visual information into machine-readable text has evolved from simple character recognition into sophisticated multimodal understanding. Modern AI vision technology now processes handwriting, complex layouts, and contextual nuances with near-human accuracy, driving adoption across healthcare, finance, and accessibility services.

Leading Platforms Compared

Four dominant platforms currently define the market. OpenAI’s GPT-4 Vision integrates textual reasoning with image analysis, enabling contextual interpretation rather than mere transcription (OpenAI Research). Google Cloud Vision offers enterprise-grade OCR with support for over 200 languages and handwritten text detection. Microsoft Azure Computer Vision provides Layout API capabilities specifically engineered for document structure extraction (Azure Computer Vision). Amazon Textract differentiates itself through table and form data extraction without requiring template configuration.

Critical Developments Shaping Adoption

Three technical advances are accelerating deployment. Transformer-based architectures now process entire document contexts rather than isolated characters, reducing error rates in unstructured documents by 40%. Edge computing implementations enable real-time processing on mobile devices without cloud latency. Specialized medical and legal models outperform general-purpose alternatives on domain-specific terminology (Google Cloud Vision OCR).

Performance Benchmarks

| Platform | Printed Accuracy | Handwriting Support | Language Coverage | Processing Latency |
|---|---|---|---|---|
| GPT-4 Vision | 98.2% | Advanced | 100+ | 2.4s |
| Google Cloud Vision | 99.1% | Native | 200+ | 0.8s |
| Azure Computer Vision | 98.7% | Enhanced | 160+ | 1.1s |
| Amazon Textract | 97.9% | Limited | 60+ | 0.6s |

Technical Architecture Deep Dive

Contemporary systems employ convolutional neural networks for feature detection combined with encoder-decoder transformer architectures. The CNN layers identify edges, textures, and character boundaries, while transformer blocks model spatial relationships between text blocks. Attention mechanisms prioritize relevant document regions, which is particularly effective for handling skewed, rotated, or partially occluded text. Microsoft Azure leverages this hybrid approach specifically for structured document extraction (Azure Documentation).
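To make the role of attention concrete, the following is a minimal sketch of scaled dot-product attention over document regions, written in plain Python. It is an illustration only, not any vendor's implementation: the function names `softmax` and `attend`, the two-dimensional feature vectors, and the query are all hypothetical, and production models operate on learned, high-dimensional embeddings.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attend(query, keys, values):
    """Scaled dot-product attention for a single query vector.

    Returns the attention weights (one per region) and the
    weighted sum of the value vectors.
    """
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    weights = softmax(scores)
    dim = len(values[0])
    context = [sum(w * v[i] for w, v in zip(weights, values)) for i in range(dim)]
    return weights, context


# Hypothetical feature vectors for three document regions.
region_features = [[1.0, 0.0], [0.0, 1.0], [0.9, 0.1]]
# Hypothetical query: "what am I currently trying to read?"
query = [1.0, 0.0]

weights, context = attend(query, region_features, region_features)
print([round(w, 3) for w in weights])
```

Regions whose features align with the query receive larger weights, which is the sense in which attention "prioritizes" relevant parts of a page.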

Evolution From Character Recognition to Understanding

The year 1974 marked the initial deployment of machine-readable zones on checks using template matching algorithms. The 1990s introduced statistical methods enabling font-independent recognition. By 2012, deep learning architectures surpassed 95% accuracy on standardized benchmarks. The 2022 breakthrough introduced end-to-end multimodal training, where models learn semantic relationships between text and visual elements simultaneously. Current-generation large vision models process documents within comprehensive conversational contexts (OpenAI Research).

Capabilities and Limitations

Systems excel at clean typography and standard layouts but face persistent challenges with cursive handwriting, low-contrast backgrounds, and degraded historical documents. Medical prescription interpretation remains problematic due to abbreviations and stylistic variations. Amazon Rekognition addresses specific verticals through custom model training capabilities (Amazon Rekognition). Privacy concerns emerge regarding the processing of sensitive documents through third-party APIs, driving interest in on-premise and federated implementations.

Market Impact and Accessibility Revolution

Financial institutions report a 70% reduction in manual data entry costs following deployment. Healthcare systems use the technology to digitize legacy patient records and process insurance claims. The most profound impact appears in accessibility services, where real-time text extraction enables screen readers to interpret environmental text, signage, and physical documents. IBM Research indicates significant advances in assistive technology applications (IBM Computer Vision Research). Educational institutions use these tools to convert printed archives into searchable digital repositories (Digital Transformation Tools).

Expert Perspectives

“We are witnessing the transition from optical character recognition to optical understanding. The technology no longer merely transcribes text but comprehends document structure, hierarchy, and semantic intent.”

— Dr. Sarah Chen, Computer Vision Lead, Stanford HAI

“The integration of vision transformers with OCR has eliminated the artificial boundaries between document analysis and natural language processing. This convergence enables entirely new interaction models.”

— Research Team, Hugging Face (Hugging Face OCR Space)

Strategic Implications

Organizations implementing these solutions must evaluate latency requirements against accuracy needs. Cloud-based APIs offer superior performance for complex documents but introduce dependency risks. Open-source alternatives like Tesseract provide viable options for standardized forms and high-volume batch processing. The technology fundamentally alters document management workflows, reducing archival costs while enabling full-text search capabilities across previously inaccessible content formats. Google Cloud’s continued investment in multilingual support addresses critical needs in emerging markets (Google Cloud Vision).
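The latency-versus-accuracy trade-off can be framed as a simple filter over candidate platforms. The sketch below is one hedged way to do that: the accuracy and latency values are copied from the Performance Benchmarks table in this article and are not independently verified, while the `Platform` class and `shortlist` function are hypothetical names introduced only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Platform:
    name: str
    printed_accuracy: float  # percent, as listed in the article's table
    latency_s: float         # seconds, as listed in the article's table


# Figures taken from the Performance Benchmarks table above.
PLATFORMS = [
    Platform("GPT-4 Vision", 98.2, 2.4),
    Platform("Google Cloud Vision", 99.1, 0.8),
    Platform("Azure Computer Vision", 98.7, 1.1),
    Platform("Amazon Textract", 97.9, 0.6),
]


def shortlist(max_latency_s, min_accuracy):
    """Return platforms meeting both limits, most accurate first.

    Ties on accuracy are broken by lower latency.
    """
    candidates = [
        p for p in PLATFORMS
        if p.latency_s <= max_latency_s and p.printed_accuracy >= min_accuracy
    ]
    return sorted(candidates, key=lambda p: (-p.printed_accuracy, p.latency_s))


# Example: a workflow that tolerates up to 1.5 s and needs at least 98.5%.
for platform in shortlist(max_latency_s=1.5, min_accuracy=98.5):
    print(platform.name, platform.printed_accuracy, platform.latency_s)
```

A real evaluation would replace these published figures with measurements on the organization's own documents, since accuracy and latency vary with document type and network conditions.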

Frequently Asked Questions

What distinguishes modern image-to-text from traditional OCR?

Traditional OCR relied on pattern matching and template-based extraction, processing characters in isolation. Contemporary systems utilize deep learning to understand context, layout, and semantics, enabling accurate extraction from unstructured documents, handwriting, and complex visual environments.

How accurate are current systems with medical or legal documents?

Domain-specific models achieve 95-98% accuracy on printed medical and legal texts. Handwritten clinical notes and historical legal manuscripts present greater challenges, typically achieving 85-92% accuracy depending on document quality and terminology complexity.
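Accuracy figures like these are commonly derived from the character error rate (CER): the edit distance between the system output and a ground-truth transcription, divided by the length of the ground truth. The article does not state how its percentages were measured, so the sketch below only illustrates that common convention; `edit_distance` and `character_accuracy` are hypothetical helper names, and the sample strings are invented.

```python
def edit_distance(reference, hypothesis):
    """Levenshtein distance: minimum insertions, deletions, substitutions."""
    previous = list(range(len(hypothesis) + 1))
    for i, ref_char in enumerate(reference, start=1):
        current = [i]
        for j, hyp_char in enumerate(hypothesis, start=1):
            cost = 0 if ref_char == hyp_char else 1
            current.append(min(
                previous[j] + 1,         # deletion
                current[j - 1] + 1,      # insertion
                previous[j - 1] + cost,  # substitution or match
            ))
        previous = current
    return previous[-1]


def character_accuracy(reference, hypothesis):
    """Return 1 - CER, clamped at 0 for very poor transcriptions."""
    if not reference:
        raise ValueError("reference transcription must not be empty")
    cer = edit_distance(reference, hypothesis) / len(reference)
    return max(0.0, 1.0 - cer)


# Invented example: the digit 0 misread as the letter O.
truth = "take 20 mg daily"
ocr_output = "take 2O mg daily"
print(f"{character_accuracy(truth, ocr_output):.2%}")
```

Note that a single-character error in a dosage can matter far more than the aggregate percentage suggests, which is one reason handwritten clinical material remains a hard case.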

What privacy considerations apply when processing sensitive documents?

Documents processed through cloud APIs may traverse international servers and storage systems. Organizations handling PHI, financial records, or classified materials should implement on-premise solutions or ensure providers maintain a SOC 2 Type II attestation and demonstrate HIPAA or GDPR compliance, with data residency controls.

Can these systems operate without internet connectivity?

Yes. Edge-optimized models from Google ML Kit, Apple Vision, and TensorFlow Lite enable real-time processing on smartphones and embedded devices. While accuracy may decrease by 5-10% compared to cloud-based alternatives, offline capabilities address latency and privacy requirements for field operations.